
Training an instance segmentation model might look daunting, since it can require a significant amount of computing and storage resources.

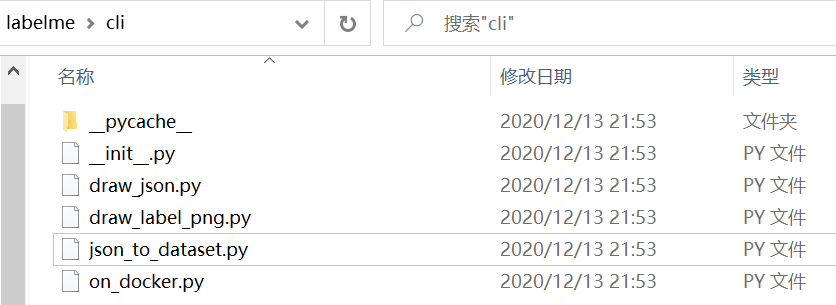

Labelme is quite similar to labelImg for bounding-box annotation, so anyone familiar with labelImg should be able to start annotating with labelme in no time.

You can install labelme as shown below, find prebuilt executables in the releases section, or download the latest Windows 64-bit executable I built earlier.

```bash
# python3
conda create --name=labelme python=3.6
source activate labelme  # or "activate labelme" on Windows
# conda install -c conda-forge pyside2
# conda install pyqt
pip install pyqt5  # pyqt5 can be installed via pip on python3
pip install labelme
```

When you open the tool, click the "Open Dir" button and navigate to the folder containing your images; then you can start drawing polygons. To finish a polygon, press the "Enter" key, and the tool should connect the first and last points automatically. When you are done annotating an image, press the shortcut key "D" to move to the next image. I annotated 18 images, each containing multiple objects, and it took me about 30 minutes.

Once all images are annotated, you will find a list of JSON files in your images directory, each with the same base file name as its image. Those are labelme annotation files; in the next step we will convert them into a single COCO dataset annotation JSON file (or two JSON files for a train/test split).

## Convert labelme annotation files to COCO dataset format

You can find the labelme2coco.py file on my GitHub. To apply the conversion, you only need to pass in one argument: the images directory path. The script depends on three pip packages: labelme, numpy, and pillow. Go ahead and install them with pip if you are missing any of them.

After executing the script, you will find a file named trainval.json in the current directory; that is the COCO dataset annotation JSON file. Optionally, you can then verify the annotations by opening the COCO_Image_Viewer.ipynb Jupyter notebook. If everything works, it should show something like below.

## Train an instance segmentation model with mmdetection framework

If you are unfamiliar with the mmdetection framework, I suggest giving my previous post, "How to train an object detection model with mmdetection", a try. The framework lets you train many object detection and instance segmentation models with configurable backbone networks through the same pipeline. The only thing you need to modify is the model config Python file, where you define the model type, the number of training epochs, the dataset type and path, and so on.

For instance segmentation, several options are available: you can do transfer learning with Mask RCNN or Cascade Mask RCNN using pre-trained backbone networks. To make it even more beginner-friendly, just run the Google Colab notebook online with a free GPU and download the final trained model. The notebook is quite similar to the previous object detection demo, so I will let you run it and play with it.

Here is the final prediction result after training a Mask RCNN model for 20 epochs, which took less than 10 minutes. Feel free to try other model config files, or tweak the existing one by increasing the training epochs or changing the batch size, and see how that affects the results.

Also note that, for simplicity and because the demo dataset is small, we skipped the train/test split. You can do it yourself by manually splitting the labelme JSON files into two directories and running the labelme2coco.py script on each directory to generate two COCO annotation JSON files.
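The manual train/test split of labelme files can also be scripted. Below is a minimal sketch of a hypothetical helper, `split_labelme_dir` (not part of labelme or of labelme2coco.py), that shuffles the labelme JSON files with a fixed seed and copies each one, together with every file sharing its base name (assumed to be its image), into `train/` and `test/` subfolders. The 20% test ratio is an arbitrary default chosen for illustration.

```python
import random
import shutil
from pathlib import Path


def split_labelme_dir(images_dir, test_ratio=0.2, seed=0):
    """Copy labelme annotations and their images into train/ and test/ subfolders.

    Hypothetical helper: assumes each "<name>.json" sits next to an image with
    the same base name (e.g. "<name>.jpg"). Returns the number of JSON files
    placed in each split.
    """
    src = Path(images_dir)

    # Group every regular file in the folder by its base name (stem).
    by_stem = {}
    for f in src.iterdir():
        if f.is_file():
            by_stem.setdefault(f.stem, []).append(f)

    json_files = sorted(src.glob("*.json"))
    random.Random(seed).shuffle(json_files)  # reproducible shuffle
    n_test = int(len(json_files) * test_ratio)
    splits = {"test": json_files[:n_test], "train": json_files[n_test:]}

    for split_name, files in splits.items():
        out_dir = src / split_name
        out_dir.mkdir(exist_ok=True)
        for json_file in files:
            # Copy the annotation plus any image sharing the same stem.
            for f in by_stem[json_file.stem]:
                shutil.copy2(f, out_dir / f.name)

    return {name: len(files) for name, files in splits.items()}
```

For the 18-image demo dataset, `test_ratio=0.2` would put 3 annotated images in `test/` and 15 in `train/`; labelme2coco.py would then be run once on each subfolder.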
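The labelme2coco.py script itself is on my GitHub and is not reproduced here. As a rough illustration of what such a conversion involves, here is a simplified, hypothetical sketch in plain Python (it does not use the labelme, numpy, or pillow packages). It assumes each labelme JSON file has the `shapes`, `imagePath`, `imageHeight`, and `imageWidth` fields, converts only polygon shapes, and emits COCO-style `images`, `annotations`, and `categories` lists. Polygon areas come from the shoelace formula, and bounding boxes follow COCO's `[x, y, width, height]` convention. The names `labelme_to_coco` and `convert_dir` are made up for this example.

```python
import json
from pathlib import Path


def polygon_area(points):
    """Polygon area via the shoelace formula; points is [[x, y], ...]."""
    total = 0.0
    n = len(points)
    for i in range(n):
        x1, y1 = points[i]
        x2, y2 = points[(i + 1) % n]
        total += x1 * y2 - x2 * y1
    return abs(total) / 2.0


def labelme_to_coco(labelme_docs):
    """Merge parsed labelme JSON dicts into one COCO-style dict (simplified).

    Only polygon shapes are converted, category ids are assigned in order of
    first appearance, and every object is marked iscrowd=0.
    """
    coco = {"images": [], "annotations": [], "categories": []}
    category_ids = {}
    ann_id = 1
    for image_id, doc in enumerate(labelme_docs, start=1):
        coco["images"].append({
            "id": image_id,
            "file_name": doc["imagePath"],  # used as-is
            "height": doc["imageHeight"],
            "width": doc["imageWidth"],
        })
        for shape in doc["shapes"]:
            # Treat a missing/null shape_type as a polygon; skip other types.
            if (shape.get("shape_type") or "polygon") != "polygon":
                continue
            label = shape["label"]
            if label not in category_ids:
                category_ids[label] = len(category_ids) + 1
                coco["categories"].append(
                    {"id": category_ids[label], "name": label, "supercategory": label}
                )
            points = shape["points"]
            xs = [p[0] for p in points]
            ys = [p[1] for p in points]
            x_min, y_min = min(xs), min(ys)
            coco["annotations"].append({
                "id": ann_id,
                "image_id": image_id,
                "category_id": category_ids[label],
                # COCO stores a polygon as a flat [x1, y1, x2, y2, ...] list.
                "segmentation": [[c for p in points for c in p]],
                "area": polygon_area(points),
                "bbox": [x_min, y_min, max(xs) - x_min, max(ys) - y_min],
                "iscrowd": 0,
            })
            ann_id += 1
    return coco


def convert_dir(images_dir, output="trainval.json"):
    """Read every labelme *.json file in images_dir and write one COCO file."""
    paths = sorted(Path(images_dir).glob("*.json"))
    docs = [json.loads(p.read_text(encoding="utf-8")) for p in paths]
    with open(output, "w", encoding="utf-8") as f:
        json.dump(labelme_to_coco(docs), f)
```

Calling `convert_dir("images")` would write `trainval.json` to the current directory, mirroring the one-argument usage described above. A production converter would also need to handle details this sketch ignores, such as non-polygon shapes.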
